MuseMeUp is an innovative application that captures your emotional state using emotion recognition analysis and heart activity data. These signals are processed in real time by AI models and enriched with cultural semantics through a Knowledge Graph. The result is a unique music composition, tailored to your experience.

MuseMeUp was developed as part of the MuseIT project, whose mission was to create multi-sensory, inclusive, and user-centered cultural experiences through innovative interactive technologies. Although MuseMeUp was originally designed for use in museums and cultural institutions, its potential reaches far beyond them: any setting where emotion and context matter can benefit from this fusion of technology, art, and human experience.

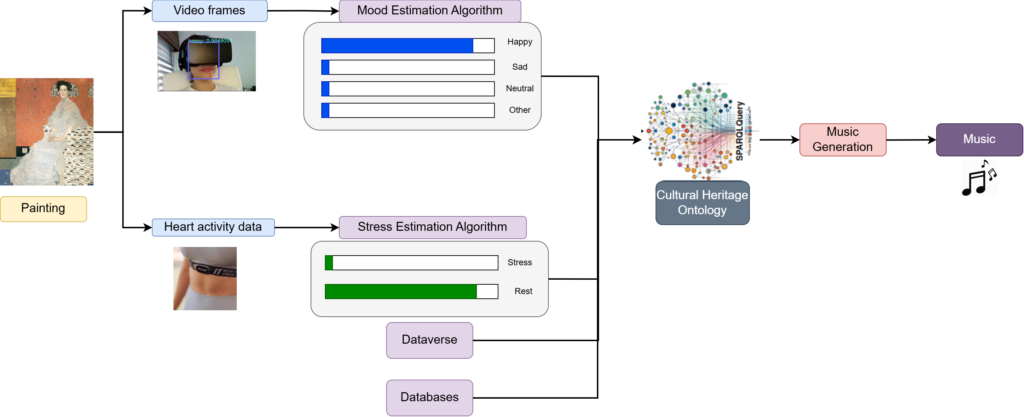

MuseMeUp brings together four main components: Mood Estimation, Stress Estimation, a Cultural Heritage Knowledge Graph, and Music Generation.

The diagram depicts how the four main components interact with each other. While the user interacts with a cultural asset, their facial expressions and heart activity data are analyzed by the Mood Estimation and Stress Estimation algorithms, respectively. The results are then combined with the cultural context data retrieved from the Knowledge Graph. All inputs are fed into the Music Generation component, where a piece of music tailored to the user’s emotional state and experience is synthesized and played.

The Mood Estimation component analyzes your facial expressions through webcam input to estimate your current emotional state in real time. The backbone of the service is a Convolutional Neural Network (CNN) model, specifically mini-Xception [1], trained on a custom dataset.

The Stress Estimation service assesses your stress levels by analyzing your electrocardiogram (ECG), recorded through a Polar H10 chest strap. ECG is one of the simplest and fastest ways to record the heart’s electrical activity. Specifically, an ECG shows how fast the heart is beating, whether the rhythm is steady or irregular, and, physiologically speaking, how the electrical signals travel through the heart’s chambers [2]. The ECG signal has been shown to be a reliable indicator of stress states [3, 4], making it well suited to this application.

At the service’s core lies a one-dimensional CNN model, trained using a self-supervised learning technique. The model was trained on a custom dataset, collected through dedicated workshops.
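The 1D CNN itself is not described in this post, so the sketch below is not the MuseMeUp model. It only illustrates the kind of information an ECG carries about stress by computing two classical heart rate variability measures, mean heart rate and RMSSD, from R-peak timestamps. The function names and the sample timestamps are hypothetical.

```python
import math

def rr_intervals_ms(r_peak_times_s):
    """Successive R-R intervals in milliseconds, from R-peak times in seconds."""
    return [(b - a) * 1000.0 for a, b in zip(r_peak_times_s, r_peak_times_s[1:])]

def mean_heart_rate_bpm(rr_ms):
    """Mean heart rate in beats per minute."""
    return 60000.0 / (sum(rr_ms) / len(rr_ms))

def rmssd_ms(rr_ms):
    """Root mean square of successive R-R interval differences."""
    diffs = [b - a for a, b in zip(rr_ms, rr_ms[1:])]
    return math.sqrt(sum(d * d for d in diffs) / len(diffs))

# Hypothetical R-peak timestamps (seconds), not real recordings.
r_peaks = [0.0, 0.8, 1.7, 2.5, 3.4]
rr = rr_intervals_ms(r_peaks)
heart_rate = mean_heart_rate_bpm(rr)
rmssd = rmssd_ms(rr)
```

Lower RMSSD is commonly associated with reduced vagal activity, which tends to accompany stress [4]. A learned model such as the one used here works on the raw signal instead of hand-crafted features like these.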

To enrich the physiological metrics with cultural context, we built a dedicated Cultural Heritage Knowledge Graph (KG). This KG stores structured metadata about each artifact a user interacts with, such as its creator, time period, keywords, description and more.

The first step was to create the ontology using Protégé. The designed ontology included terms from other high-level ontologies like Dublin Core and FOAF to ensure interoperability. We then stored it in Ontotext’s GraphDB triplestore and populated it with data provided by partners during the MuseIT project. The population of the graph was carried out through Catalink’s CASPAR, a tool that continuously ingests incoming data, converts them into RDF based on the ontology, and sends them to the triplestore. This setup enables us to run SPARQL queries over the data, allowing for flexible, real-time retrieval of cultural context during user interaction.
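As a rough illustration of the retrieval step, the sketch below builds a SPARQL query for an artifact's context and prepares an HTTP request for a GraphDB repository, without sending it. The repository URL, the artifact IRI, and the choice of Dublin Core properties are assumptions made for this example; the actual MuseIT ontology and endpoint may differ.

```python
from urllib.parse import urlencode
from urllib.request import Request

# Hypothetical endpoint and artifact IRI, for illustration only.
ENDPOINT = "http://localhost:7200/repositories/museit"
ARTIFACT_IRI = "http://example.org/artifact/42"

def build_context_query(artifact_iri):
    """Return a SPARQL query asking for basic Dublin Core metadata."""
    return f"""
PREFIX dcterms: <http://purl.org/dc/terms/>
SELECT ?creator ?subject ?description WHERE {{
  OPTIONAL {{ <{artifact_iri}> dcterms:creator ?creator . }}
  OPTIONAL {{ <{artifact_iri}> dcterms:subject ?subject . }}
  OPTIONAL {{ <{artifact_iri}> dcterms:description ?description . }}
}}
""".strip()

def build_request(endpoint, query):
    """Prepare (but do not send) a SPARQL Protocol GET request."""
    url = endpoint + "?" + urlencode({"query": query})
    return Request(url, headers={"Accept": "application/sparql-results+json"})

query = build_context_query(ARTIFACT_IRI)
request = build_request(ENDPOINT, query)
```

Passing `request` to `urllib.request.urlopen` would execute the query against a running repository and return the bindings as JSON.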

More details about the semantics behind MuseMeUp can be found in the dedicated blog post. Experiments using this kind of data, such as a recommendation system built on top of the graph, can be found here.

The Music Generation Component produces personalized musical pieces by applying music theory rules that link specific musical elements to emotional responses and cultural context.

The generated music is influenced by two main factors. First, the emotional tone of the music is shaped by the user’s mood. For example, if the model detects mostly happy expressions, the music follows a major key with uplifting characteristics. For sadder moods, the music shifts to a minor key, producing a more melancholic feel.

Second, the cultural context of the artifacts influences the musical style. For example, if the user explored a modern art artifact, the music might include electric instruments, whereas if the artifacts are from the Ancient period, the composition may use instruments like the lyre or aulos. Blending these two aspects, emotion and cultural context, yields a unique, personalized musical piece.
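The two factors can be pictured as a pair of lookup rules. The sketch below is a simplified assumption of how such rules might be encoded, not the actual Music Generation component: the rule tables, the default mode, the period labels, and the specific modern instruments are all hypothetical.

```python
from collections import Counter

# Hypothetical rule tables; the real MuseMeUp rules are not published here.
MODE_BY_MOOD = {"happy": "major", "sad": "minor"}
INSTRUMENTS_BY_PERIOD = {
    "modern": ["electric guitar", "synthesizer"],
    "ancient": ["lyre", "aulos"],
}

def dominant_mood(frame_labels):
    """Most frequent per-frame emotion label in the session."""
    return Counter(frame_labels).most_common(1)[0][0]

def plan_music(frame_labels, periods):
    """Combine the dominant mood and the explored periods into a simple plan."""
    mood = dominant_mood(frame_labels)
    mode = MODE_BY_MOOD.get(mood, "major")  # assumed default for other moods
    instruments = []
    for period in periods:
        for instrument in INSTRUMENTS_BY_PERIOD.get(period, []):
            if instrument not in instruments:
                instruments.append(instrument)
    return {"mood": mood, "mode": mode, "instruments": instruments}

# Mostly happy expressions while exploring an artifact from the Ancient period.
plan = plan_music(["happy", "happy", "sad", "happy"], ["ancient"])
```

Here `plan` selects a major key with lyre and aulos, matching the examples given in the text.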

It all starts when you engage with a cultural artifact (for example, a painting or video). During this interaction, your facial expressions and heart activity are recorded. Our AI models analyze this data to detect your mood and stress levels in real time. Meanwhile, this data is enriched with context from the Cultural Heritage Knowledge Graph, which adds information such as keywords, historical period, and themes based on what you explore. Finally, a piece of music tailored to your emotional state and experience is synthesized and played.

The MuseMeUp setup is relatively simple. The only equipment needed is a PC or laptop with a built-in or external webcam and a Polar H10 chest strap sensor.

To explore a simplified version of MuseMeUp from the comfort of your home, using only a webcam, you can navigate to the MuseIT website https://museit.eu/landing-page.

Here is a step-by-step guide to running MuseMeUp:

1. Log in to the MuseIT website using your credentials.
2. Select the option ‘Explore Artefacts’. This page includes a collection of cultural artifacts, from paintings to historical maps and traditional attire. You can navigate through the collection by scrolling through the available pages, and you can narrow down the displayed artifacts by adding relevant filters.
3. To use the MuseMeUp application, click the green camera button, located next to the filter search bar. This button triggers the mood estimation app, which captures your facial expressions and detects your emotion.
4. While the camera is recording, explore the collection by clicking on the artifacts that interest you.
5. When you’re done exploring, click the red ‘Stop’ button to stop the recording.
6. After a few seconds, a piece of music personalized to your experience will be generated and made available for download. To download it, click the blue ‘Download’ button.

Collections page of the MuseIT website. You can explore the cultural asset collection by scrolling through the available pages. To start the MuseMeUp experience, click the green camera button next to the filter search bar. This activates the mood estimation feature, which captures your facial expressions and detects your emotional state. Once you’re done exploring, click the red “Stop” button to end the recording.

After a few seconds, your personalized music will be ready. Just click the blue “Download” button, also located near the filter search bar, to download and listen to your generated piece of music.

MuseMeUp enhances the way people experience cultural heritage, making it more inclusive and engaging.

User interacting with MuseMeUp during a workshop, as part of the MuseIT project.

Have questions? Reach out to us for further information.

Acknowledgement: This application is part of MuseIT’s mission to create multi-sensory, inclusive, and user-centered cultural experiences through innovative interactive technologies. This project is funded by the European Union.

References

[1] Arriaga, Octavio, Matias Valdenegro-Toro, and Paul Plöger. “Real-time convolutional neural networks for emotion and gender classification.” arXiv preprint arXiv:1710.07557 (2017).

[2] Johns Hopkins Medicine. 2025. “Electrocardiogram.” Johns Hopkins Medicine. Accessed July 14, 2025. https://www.hopkinsmedicine.org/health/treatment-tests-and-therapies/electrocardiogram.

[3] Sharma, Nandita, and Tom Gedeon. “Objective measures, sensors and computational techniques for stress recognition and classification: A survey.” Computer methods and programs in biomedicine 108, no. 3 (2012): 1287-1301.

[4] Laborde, Sylvain, Emma Mosley, and Julian F. Thayer. “Heart rate variability and cardiac vagal tone in psychophysiological research–recommendations for experiment planning, data analysis, and data reporting.” Frontiers in psychology 8 (2017): 213.