Have you ever wished you could express your emotions without saying a word?

Or wondered what it would be like to listen to your heart — not metaphorically, but literally?

At Catalink, we developed MuseMeUp as part of the MuseIT project: a tool that transforms your biophysiological signals into personalized music, in real time. It blends semantic technologies, AI models, and cultural knowledge to create sound that resonates with both your emotions and what you’re engaging with.

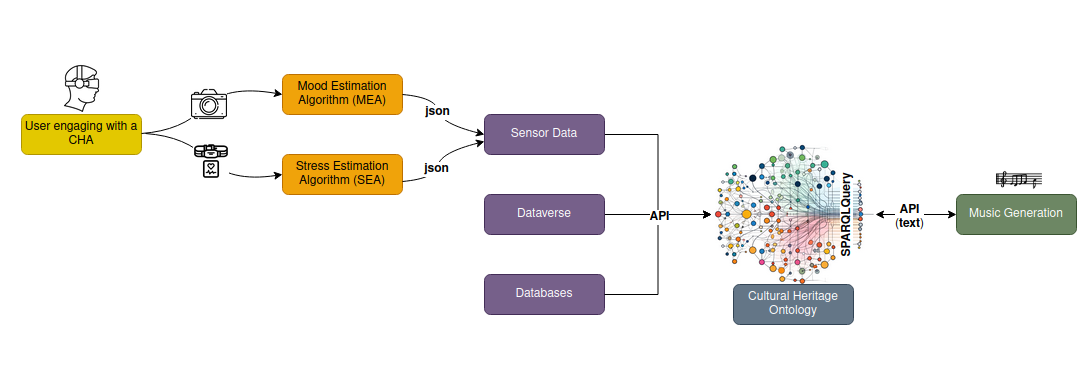

The Pipeline

Your facial expressions and heart rate are captured and analyzed by AI models to detect your emotional and stress state, then structured in our Sensor Ontology. At the same time, the system queries the Cultural Heritage Knowledge Graph to retrieve metadata about the artifact you’re engaging with.

These two streams — your inner state and the artifact’s context — are then combined to guide the music generation engine.

The result? A personalized composition where musical features like instruments, tempo, harmony, and style adapt to both your emotional state and the cultural context of what you’re experiencing.
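To make the combination step concrete, here is a minimal sketch of how the two streams might be merged into one set of musical parameters. It is not the MuseMeUp implementation: the class names, the lookup tables, and the specific tempo, mode, and instrument values are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class UserState:
    emotion: str  # e.g. "happy" or "sad" (illustrative labels)
    stress: str   # e.g. "calm" or "stressed" (illustrative labels)


@dataclass
class ArtifactContext:
    genre: str       # e.g. "classical" or "pop"
    keywords: tuple  # free-text themes attached to the artifact


# Hypothetical lookup tables; real mappings would come from the project itself.
TEMPO_BY_STRESS = {"calm": 70, "neutral": 95, "stressed": 120}
MODE_BY_EMOTION = {"happy": "major", "sad": "minor"}
INSTRUMENTS_BY_GENRE = {
    "classical": ["strings", "piano"],
    "pop": ["synth", "drums"],
}


def music_parameters(user: UserState, artifact: ArtifactContext) -> dict:
    """Merge the user's state and the artifact's context into one parameter set."""
    return {
        # The user's inner state drives tempo and harmony.
        "tempo_bpm": TEMPO_BY_STRESS.get(user.stress, 95),
        "mode": MODE_BY_EMOTION.get(user.emotion, "major"),
        # The artifact's cultural context drives instruments and style.
        "instruments": INSTRUMENTS_BY_GENRE.get(artifact.genre, ["piano"]),
        "style": artifact.genre,
    }


params = music_parameters(
    UserState(emotion="happy", stress="calm"),
    ArtifactContext(genre="classical", keywords=("classical music",)),
)
print(params)
```

A real generation engine would likely work with continuous signals and richer metadata; the fixed tables here only show which input influences which musical feature.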

Figure 1. Overview of the MuseMeUp pipeline for generating personalized music from emotional and cultural data.

The Power of Semantic Technologies

At the heart of MuseMeUp is a semantic infrastructure that connects emotions, culture, and music in a meaningful, structured way.

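We’ve developed and used two key ontologies: the Sensor Ontology, which structures the detected emotional and stress states, and the Cultural Heritage Knowledge Graph, which describes the artifacts users engage with.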

These two ontologies are brought together through semantic links, using constructs like owl:sameAs to connect user-engaged artifacts with their equivalents in the Cultural Heritage Knowledge Graph. This enables MuseMeUp to reason across domains, enrich the experience with linked data, and support real-time, context-aware interaction.
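The effect of an owl:sameAs link can be sketched with a handful of in-memory triples. This is a simplified illustration, not the project's reasoning setup: the `sensor:` and `chkg:` prefixes, the property names, and the identifiers are hypothetical, and a real deployment would rely on a triple store and reasoner rather than hand-written traversal.

```python
OWL_SAME_AS = "owl:sameAs"

# Triples as (subject, predicate, object); every identifier is hypothetical.
triples = [
    ("sensor:artifact_42", OWL_SAME_AS, "chkg:asset_7"),
    ("sensor:session_1", "sensor:engagedWith", "sensor:artifact_42"),
    ("sensor:session_1", "sensor:hasEmotion", "happy"),
    ("chkg:asset_7", "chkg:genre", "pop"),
    ("chkg:asset_7", "chkg:keyword", "concert"),
]


def same_as(node, triples):
    """Return node plus everything reachable via owl:sameAs in either direction."""
    seen, frontier = {node}, [node]
    while frontier:
        current = frontier.pop()
        for s, p, o in triples:
            if p != OWL_SAME_AS:
                continue
            # owl:sameAs is symmetric, so follow the link both ways.
            for a, b in ((s, o), (o, s)):
                if a == current and b not in seen:
                    seen.add(b)
                    frontier.append(b)
    return seen


def properties(node, triples):
    """Collect (predicate, object) pairs for a node and all its equivalents."""
    equivalents = same_as(node, triples)
    return {
        (p, o) for s, p, o in triples
        if s in equivalents and p != OWL_SAME_AS
    }


artifact_props = properties("sensor:artifact_42", triples)
print(artifact_props)
```

Because the two identifiers are treated as the same resource, asking about the artifact recorded on the sensor side also returns the genre and keyword stored on the cultural heritage side.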

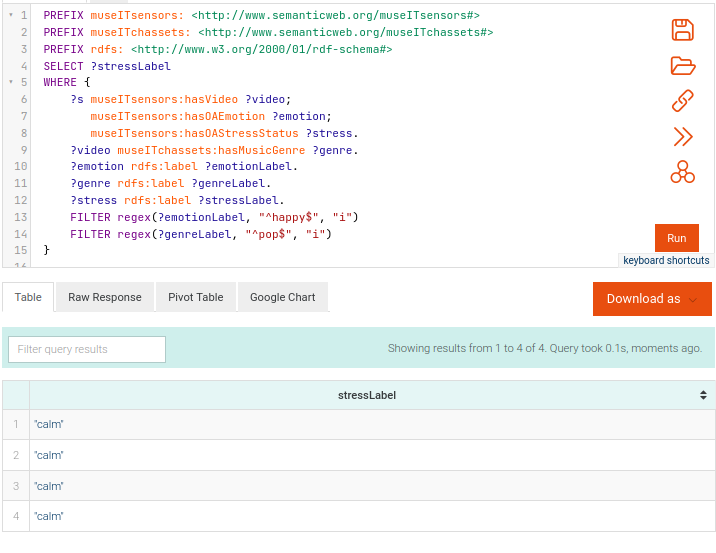

Examples of Semantic Queries

Semantic technologies allow us to query, connect, and reason over heterogeneous data in ways that go far beyond simple metadata lookups. Here are two real examples showing how SPARQL can be used to extract meaningful insights by combining emotional states, cultural themes, and linked concepts:

Figure 2. SPARQL query and result table for identifying the most frequent emotion associated with the keyword “classical music” and stress level “calm”.

Figure 3. SPARQL query and result table for identifying the stress level associated with assets that have the genre “pop” and where users expressed “happy” emotions.
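The logic behind the two queries (filter on some properties, then count another) can be mimicked in plain Python. The sketch below is an in-memory analogue of a SPARQL filter with grouping and counting, not the queries shown in the figures: the records are made-up sample data, the field names are assumptions, and reading the second query as "most frequent stress level" is also an assumption.

```python
from collections import Counter

# Hypothetical flattened records: one row per user session with an artifact.
sessions = [
    {"keyword": "classical music", "genre": "classical", "emotion": "happy", "stress": "calm"},
    {"keyword": "classical music", "genre": "classical", "emotion": "happy", "stress": "calm"},
    {"keyword": "classical music", "genre": "classical", "emotion": "sad", "stress": "calm"},
    {"keyword": "dance", "genre": "pop", "emotion": "happy", "stress": "calm"},
    {"keyword": "dance", "genre": "pop", "emotion": "happy", "stress": "stressed"},
    {"keyword": "dance", "genre": "pop", "emotion": "happy", "stress": "calm"},
]


def most_frequent(rows, field, **filters):
    """Count values of `field` over rows matching every filter; return the top pair."""
    counts = Counter(
        row[field]
        for row in rows
        if all(row[key] == value for key, value in filters.items())
    )
    return counts.most_common(1)[0] if counts else None


# In the spirit of Figure 2: emotion for keyword "classical music" and stress "calm".
top_emotion = most_frequent(sessions, "emotion", keyword="classical music", stress="calm")

# In the spirit of Figure 3: stress level for genre "pop" and emotion "happy".
top_stress = most_frequent(sessions, "stress", genre="pop", emotion="happy")

print(top_emotion)  # ('happy', 2)
print(top_stress)   # ('calm', 2)
```

In a knowledge graph the same pattern spans both ontologies at once, since the emotion and stress values live on the sensor side while keywords and genres live on the cultural heritage side.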

Queries like these let MuseMeUp ground each personalized composition in both your emotional state and the cultural context of what you are engaging with.

Have questions? Reach out to us for further information.